I wonder if one of the reasons they're so young is that's the age you'd have to be to not realize how much legal trouble they might be putting themselves in. (Barring an eventual pardon from Trump.)

Myself I've learned to embrace the em dash—like so, with a special shoutout to John Green—and interleaving ( [ { } ] ).

On Mac and Linux it's conveniently shortcut to Option+Shift+'-'; on Windows it's a much less satisfying Alt+0151 (Alt+0150 gives the shorter en dash) without third-party tools like AutoHotkey.

Warmest memory is our dad reading the Chronicles of Narnia to me and my sisters (until we were quite old, I think), really going for the voice acting.

After wracking my brain trying to remember novels I read as a kid and coming up blank, I finally remembered most of my early sci-fi books were in French, mostly aimed at middle-school-aged kids, but they gave me an early taste for sci-fi, and neat cover art to boot: https://www.noosfere.org/livres/collection.asp?NumCollection=-10246504&numediteur=3902

A few choice picks:

Les Abîmes d'Autremer: (rough translation: The Abysses of Othersea)

About a young journalist investigating the planet (Autremer) with the best spaceships (the Abîmes), which turn out to be retro-fitted space whales, that the pilot bonds to, and the journalist herself ends up as a pilot.

L'Œil des Dieux: (The Eye of the Gods)

About a group of 29 kids who were left behind at a lunar station after a serious accident while they were still in kindergarten (nobody knows they are still alive). The story is told many years later, with the oldest ones now teenagers; they don't really remember any of this (having been raised by a now-defunct nanny robot), and for them the station is the whole world. They don't really understand technology, and are separated into three semi-hostile tribes: the Wolves, the Bears, and the Craze.

(Generation ship sci-fi is still one of my favorite genres; if I ever write a sci-fi book it would probably be in that setting.)

L'Or Bleu: (Blue gold)

This one I don't remember that well and don't have at hand, but it follows a young man from Saturn visiting Earth for the first time, in a world where water has become scarce, specifically in Atlanpolis, capital city of the now dry Mediterranean sea, and Paris with a now dry Seine river. He makes his living by being very good at arcade virtual reality videogames with leaderboards (the book is originally from 1989), and uncovers the machinations of those who have power and control of water.

Bonus Mention:

From a short story, in a collection I don't remember. It follows a girl whose life is interrupted [while vacationing with her parents] by the "Player" trying to complete his quest, and who finds out that she's actually an NPC. She's disturbingly realistic, and, falling in love, they do eventually complete the quest, with a teary goodbye and hopes that the virtual reality software the boy is using is actually tapping into parallel universes somehow. Cue the reveal: the girl was the Player all along, with slightly modified memories for "enhanced" "immersion" and "excitement". She does not mourn or worry about the boy much at all, realizing he was the NPC after all.

L'imparfait du futur, une épatante aventure de Jules: (Future Imperfect, A spiffing adventure of Jules)

This one is a whole French comic book series (bande dessinée), which tells the story of Jules, an unremarkable young man with average grades who is selected for a space program for no greatly explained reason (it later turns out the chief scientist is a crazed eugenicist with really flawed software, trying to create "ideal" pairs). It actually explores the concept and consequences of light speed travel and time dilation, something not properly explained to Jules until "take off"; cue a horrified face. When he does come back from Alpha Centauri in later installments, his younger brother is indeed now older than him. This book actually prompted me to ask the teacher in 2nd grade to please explain relativity (in front of the whole class), and to his credit he gave it a fair shot and didn't dismiss the question as not being context- or age-appropriate.

[There are actually also a lot of high-quality French sci-fi comic books, generally intended for a more grown-up audience.]

By Ursula K. Le Guin, I really like The Dispossessed, The Left Hand of Darkness, and The Wind's Twelve Quarters (especially the one with the underground mathematicians measuring the distance to the face of God [the sun]).

And done! Well, I had fun.

24! - Crossed Wires - Leaderboard time 01h01m13s (and a close personal time of 01h09m51s)

Spoilers

I liked this one! It was faster to solve part 2 semi-manually before doing it "programmatically", which feels fun.

Way too many lines follow (but they give the option of finding swaps "manually"):

```jq
#!/usr/bin/env jq -n -crR -f

( # If solving manually input need --arg swaps
  # Expected format --arg swaps 'n01-n02,n03-n04'
  # Trigger start with --arg swaps '0-0'
  if $ARGS.named.swaps then $ARGS.named.swaps |
    split(",") | map(split("-") | {(.[0]):.[1]}, {(.[1]):.[0]}) | add
  else {} end
) as $swaps |

[ inputs | select(test("->")) / " " | del(.[3]) ] as $gates |

[ # Defining Target Adder Circuit #
  def pad: "0\(.)"[-2:];
  (
    [ "x00", "AND", "y00", "c00" ],
    [ "x00", "XOR", "y00", "z00" ],
    (
      (range(1;45)|pad) as $i |
      [ "x\($i)", "AND", "y\($i)", "c\($i)" ],
      [ "x\($i)", "XOR", "y\($i)", "a\($i)" ]
    )
  ),
  (
    ["a01", "AND", "c00", "e01"],
    ["a01", "XOR", "c00", "z01"],
    (
      (range(2;45) | [. , . -1 | pad]) as [$i,$j] |
      ["a\($i)", "AND", "s\($j)", "e\($i)"],
      ["a\($i)", "XOR", "s\($j)", "z\($i)"]
    )
  ),
  (
    (
      (range(1;44)|pad) as $i |
      ["c\($i)", "OR", "e\($i)", "s\($i)"]
    ),
    ["c44", "OR", "e44", "z45"]
  )
] as $target_circuit |

( # Re-order xi XOR yi wires so that xi comes first #
  $gates | map(if .[0][0:1] == "y" then [.[2],.[1],.[0],.[3]] end)
) as $gates |

# Find swaps, mode=0 is automatic, mode>0 is manual #
def find_swaps($gates; $swaps; $mode): $gates as $old |
  # Swap output wires #
  ( $gates | map(.[3] |= ($swaps[.] // .)) ) as $gates |

  # First level: 'x0i AND y0i -> c0i' and 'x0i XOR y0i -> a0i' #
  # Get candidate wire dict F, with reverse dict R #
  ( [ $gates[]
      | select(.[0][0:1] == "x" )
      | select(.[0:2] != ["x00", "XOR"] )
      | if .[1] == "AND" then { "\(.[3])": "c\(.[0][1:])" }
        elif .[1] == "XOR" then { "\(.[3])": "a\(.[0][1:])" }
        else "Unexpected first level op" | halt_error end
    ] | add
  ) as $F | ($F | with_entries({key:.value,value:.key})) as $R |

  # Replace input and output wires with candidates #
  ( [ $gates[] | map($F[.] // .)
      | if .[2] | test("c\\d") then [ .[2],.[1],.[0],.[3] ] end
      | if .[2] | test("a\\d") then [ .[2],.[1],.[0],.[3] ] end
    ] # Makes sure that when possible a0i comes 1st, then c0i #
  ) as $gates |

  # Second level: use info rich 'c0i OR e0i -> s0i' gates #
  # Get candidate wire dict S, with reverse dict T #
  ( [ $gates[]
      | select((.[0] | test("c\\d")) and .[1] == "OR" )
      | {"\(.[2])": "e\(.[0][1:])"}, {"\(.[3])": "s\(.[0][1:])"}
    ] | add | with_entries(select(.key[0:1] != "z"))
  ) as $S | ($S | with_entries({key:.value,value:.key})) as $T |

  ( # Replace input and output wires with candidates #
    [ $gates[] | map($S[.] // .) ] | sort_by(.[0][0:1]!="x",.)
  ) as $gates | # Ensure "canonical" order #

  [ # Diff - our input gates only
    $gates - $target_circuit
    | .[] | [ . , map($R[.] // $T[.] // .) ]
  ] as $g |

  [ # Diff + target circuit only
    $target_circuit - $gates
    | .[] | [ . , map($R[.] // $T[.] // .) ]
  ] as $c |

  if $mode > 0 then
    # Manual mode print current difference #
    debug("gates", $g[], "target_circuit", $c[]) |
    if $gates == $target_circuit then
      $swaps | keys | join(",") # Output successful swaps #
    else
      "Difference remaining with target circuit!" | halt_error
    end
  else
    # Automatic mode, recursion end #
    if $gates == $target_circuit then
      $swaps | keys | join(",") # Output successful swaps #
    else
      [
        first(
          # First case when only output wire is different
          first(
            [$g,$c|map(last)]
            | combinations
            | select(first[0:3] == last[0:3])
            | map(last)
            | select(all(.[]; test("e\\d")|not))
            | select(.[0] != .[1])
            | { (.[0]): .[1], (.[1]): .[0] }
          ),
          # "Only" case where candidate a0i and c0i are in an
          # incorrect input location.
          # Might be more than one for other inputs.
          first(
            [
              $g[] | select(
                ((.[0][0] | test("a\\d")) and .[0][1] == "OR") or
                ((.[0][0] | test("c\\d")) and .[0][1] == "XOR")
              ) | map(first)
            ]
            | if length != 2 then
                "More a0i-c0i swaps required" | halt_error
              end
            | map(last)
            | select(.[0] != .[1])
            | { (.[0]): .[1], (.[1]): .[0] }
          )
        )
      ] as [$pair] |
      if $pair | not then
        "Unexpected pair match failure!" | halt_error
      else
        find_swaps($old; $pair+$swaps; 0)
      end
    end
  end
;

find_swaps($gates;$swaps;$swaps|length)
```
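For anyone who wants to sanity-check the target circuit itself, here is a minimal Python sketch (not the jq solution) that rebuilds the same ripple-carry adder, using the wire names from the jq above (`c`, `a`, `e`, `s`, `z`), and simulates it. The function names `target_circuit` and `simulate` are made up for this illustration.

```python
BITS = 45  # x00..x44 and y00..y44, as in the jq target circuit

def target_circuit(bits=BITS):
    """Gate list (in1, op, in2, out) of a ripple-carry adder."""
    gates = [("x00", "AND", "y00", "c00"), ("x00", "XOR", "y00", "z00")]
    for i in range(1, bits):
        n = f"{i:02d}"
        # Carry into bit i: c00 for bit 1, otherwise s(i-1).
        carry = "c00" if i == 1 else f"s{i - 1:02d}"
        # Carry out of bit i: the last one is wired straight to the top z bit.
        out = f"z{bits:02d}" if i == bits - 1 else f"s{n}"
        gates += [
            (f"x{n}", "AND", f"y{n}", f"c{n}"),
            (f"x{n}", "XOR", f"y{n}", f"a{n}"),
            (f"a{n}", "AND", carry, f"e{n}"),
            (f"a{n}", "XOR", carry, f"z{n}"),
            (f"c{n}", "OR", f"e{n}", out),
        ]
    return gates

OPS = {
    "AND": lambda p, q: p & q,
    "OR": lambda p, q: p | q,
    "XOR": lambda p, q: p ^ q,
}

def simulate(gates, x, y, bits=BITS):
    """Feed x and y into the x/y wires and read the number on the z wires."""
    wires = {}
    for i in range(bits):
        wires[f"x{i:02d}"] = (x >> i) & 1
        wires[f"y{i:02d}"] = (y >> i) & 1
    pending = list(gates)
    while pending:
        # Evaluate every gate whose two inputs are already known.
        ready = [g for g in pending if g[0] in wires and g[2] in wires]
        if not ready:
            raise ValueError("circuit has unresolved wires")
        for in1, op, in2, out in ready:
            wires[out] = OPS[op](wires[in1], wires[in2])
        pending = [g for g in pending if g not in ready]
    return sum(wires[f"z{i:02d}"] << i for i in range(bits + 1))
```

If `simulate(target_circuit(), x, y)` equals `x + y` for a few inputs, the target is a working adder, and any gate in the puzzle input that cannot be renamed onto it is a swap candidate.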

23!

Spoilerific

Got lucky on the max clique in part 2, my solution only works if there are at least 2 nodes in the clique, that only have the clique members as common neighbours.

Ended up reading Wikipedia to lift one of the Bron-Kerbosch methods:

```jq
#!/usr/bin/env jq -n -rR -f

reduce (
  inputs / "-" # Build connections dictionary #
) as [$a,$b] ({}; .[$a] += [$b] | .[$b] += [$a]) | . as $conn |

# Allow Loose max clique check #
if $ARGS.named.loose == true then
  # Only works if there is at least one pair in the max clique #
  # That only have the clique members in common. #
  [
    # For pairs of connected nodes #
    ( $conn | keys[] ) as $a | $conn[$a][] as $b | select($a < $b) |
    # Get the list of nodes in common #
    [$a,$b] + ($conn[$a] - ($conn[$a]-$conn[$b])) | unique
  ]
  # From largest size find the first where all the nodes in common #
  # are interconnected -> all(connections ⋂ shared == shared) #
  | sort_by(-length)
  | first (
    .[] | select( . as $cb |
      [
        $cb[] as $c
        | ( [$c] + $conn[$c] | sort )
        | ( . - ( . - $cb) ) | length
      ] | unique | length == 1
    )
  )
else # Do strict max clique check #
  # Example of loose failure:
  # 0-1 0-2 0-3 0-4 0-5 1-2 1-3 1-4 1-5
  # 2-3 2-4 2-5 3-4 3-5 4-5 a-0 a-1 a-2
  # a-3 b-2 b-3 b-4 b-5 c-0 c-1 c-4 c-5
  def bron_kerbosch1($R; $P; $X; $cliques):
    if ($P|length) == 0 and ($X|length) == 0 then
      if ($R|length) > 2 then
        {cliques: ($cliques + [$R|sort])}
      end
    else
      reduce $P[] as $v ({$R,$P,$X,$cliques};
        .cliques = bron_kerbosch1(
          .R - [$v] + [$v] ;     # R ∪ {v}
          .P - (.P - $conn[$v]); # P ∩ neighbours(v)
          .X - (.X - $conn[$v]); # X ∩ neighbours(v)
          .cliques
        ) .cliques |
        .P = (.P - [$v]) |       # P ∖ {v}
        .X = (.X - [$v] + [$v])  # X ∪ {v}
      )
    end
  ;
  bron_kerbosch1([];$conn|keys;[];[]).cliques | max_by(length)
end

| join(",") # Output password
```
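For comparison, the strict branch can be sketched in Python with sets. This is the basic Bron-Kerbosch form (no pivoting), written as a generator; `build_graph` and `password` are names invented for the sketch, and `EDGES` is the loose-failure example from the comment in the jq above.

```python
from collections import defaultdict

def build_graph(edges):
    """Map each node to the set of its neighbours."""
    conn = defaultdict(set)
    for edge in edges:
        a, b = edge.split("-")
        conn[a].add(b)
        conn[b].add(a)
    return conn

def bron_kerbosch(conn, r, p, x):
    """Yield every maximal clique that extends r (basic form, no pivoting)."""
    if not p and not x:
        yield r
        return
    for v in list(p):
        yield from bron_kerbosch(conn, r | {v}, p & conn[v], x & conn[v])
        p = p - {v}  # v has been fully explored
        x = x | {v}  # and must be excluded from later cliques

# The example on which the loose check fails.
EDGES = ("0-1 0-2 0-3 0-4 0-5 1-2 1-3 1-4 1-5 "
         "2-3 2-4 2-5 3-4 3-5 4-5 a-0 a-1 a-2 "
         "a-3 b-2 b-3 b-4 b-5 c-0 c-1 c-4 c-5").split()

def password(edges):
    conn = build_graph(edges)
    largest = max(bron_kerbosch(conn, set(), set(conn), set()), key=len)
    return ",".join(sorted(largest))
```

On that example every pair inside the 0..5 clique also shares `a`, `b` or `c` as a common neighbour, which is why the loose check misses it, while the strict search still returns the six-node clique.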

That's still mostly what it is ^^, though I'd say it's more "awk+sed" for JSON.

Hacky Manual parallelization

Massive time gains with parallelization + an optimized next function (2x speedup) by doing the 3 xor operations in "one operation". Maybe I prefer the grids ^^:

```jq
#!/usr/bin/env jq -n -f

#──────────── Little-endian (LSB first) to_bits ─────────────#
def to_bits:
  if . == 0 then [0] else { a: ., b: [] } | until (.a == 0;
    .a /= 2 |
    if .a == (.a|floor) then .b += [0]
    else .b += [1] end | .a |= floor
  ) | .b end;

#──────────── Little-endian (LSB first) from_bits ───────────#
def from_bits: [ range(length) as $i | .[$i] * pow(2; $i) ] | add;

( # Get index that contribute to next xor operation.
  def xor_index(a;b): [a, b] | transpose | map(add);
  [ range(24) | [.] ]
  | xor_index([range(6) | [-1]] + .[0:18] ; .[0:24])
  | xor_index(.[5:29] ; .[0:24])
  | xor_index([range(11) | [-1]] + .[0:13]; .[0:24])
  | map(
      sort | . as $indices | map(
        select( . as $i |
          $i >= 0 and ($indices|indices($i)|length) % 2 == 1
        )
      )
    )
) as $next_ind |

# Optimized Next, doing XOR of indices simultaneously a 2x speedup #
def next: . as $in | $next_ind | map( [ $in[.[]] // 0 ] | add % 2 );

# Still slow, because of from_bits #
def to_price($p): $p | from_bits % 10;

# Option to run in parallel using xargs, Eg:
#
# seq 0 9 | \
# xargs -P 10 -n 1 -I {} bash -c './2024/jq/22-b.jq input.txt \
# --argjson s 10 --argjson i {} > out-{}.json'
# cat out-*.json | ./2024/jq/22-b.jq --argjson group true
# rm out-*.json
#
# Speedup from naive ~50m -> ~1m
def parallel: if $ARGS.named.s and $ARGS.named.i then
  select(.key % $ARGS.named.s == $ARGS.named.i) else . end;

#════════════════════════════ X-GROUP ═══════════════════════════════#
if $ARGS.named.group then
  # Group results from parallel run #
  reduce inputs as $dic ({}; reduce (
      $dic|to_entries[]
    ) as {key: $k, value: $v} (.; .[$k] += $v )
  )
else
#════════════════════════════ X-BATCH ═══════════════════════════════#
  reduce (
    [ inputs ] | to_entries[] | parallel
  ) as { value: $in } ({}; debug($in) |
    reduce range(2000) as $_ (
      .curr = ($in|to_bits) | .p = to_price(.curr) | .d = [];
      .curr |= next | to_price(.curr) as $p
      | .d = (.d+[$p-.p])[-4:] | .p = $p  # Four differences to price
      | if .a["\($in)"]["\(.d)"]|not then # Record first price
          .a["\($in)"]["\(.d)"] = $p end  # For input x 4_diff
    )
  )
  # Summarize expected bananas per 4_diff sequence #
  | [ .a[] | to_entries[] ]
  | group_by(.key)
  | map({key: .[0].key, value: ([.[].value]|add)})
  | from_entries
end |

#═══════════════════════════ X-FINALLY ══════════════════════════════#
if $ARGS.named.s | not then
  # Output maximum expected bananas #
  to_entries | max_by(.value) | debug | .value
end
```

22!

spoilers!

Well it’s not a grid! My chosen language does not have bitwise operators so it’s a bit slow. Have to resort to manual parallelization.
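To show what the missing bitwise operators would buy, here is a rough Python sketch of the same step. It assumes the three shifts of 6, 5 and 11 bits and the 24-bit width that the jq index tables are built from; `next_secret` and `first_prices` are illustrative names, not part of the jq script.

```python
MASK = (1 << 24) - 1  # keep 24 bits, matching the 24-entry bit arrays in the jq

def next_secret(s):
    s = (s ^ (s << 6)) & MASK   # shift up by 6 bits, xor in, prune
    s = (s ^ (s >> 5)) & MASK   # shift down by 5 bits, xor in, prune
    s = (s ^ (s << 11)) & MASK  # shift up by 11 bits, xor in, prune
    return s

def first_prices(seed, steps=2000):
    """Map each run of four price changes to the first price it leads to."""
    seen = {}
    secret, price, diffs = seed, seed % 10, ()
    for _ in range(steps):
        secret = next_secret(secret)
        new_price = secret % 10
        diffs = (diffs + (new_price - price,))[-4:]  # last four changes
        price = new_price
        if len(diffs) == 4 and diffs not in seen:
            seen[diffs] = price
    return seen
```

Starting from 123, `next_secret` gives 15887950. Summing the `first_prices` dictionaries over all inputs and taking the largest total is the same grouping the jq does in its X-GROUP and X-FINALLY steps.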

EDIT: I have a sneaking suspicion that the computer will need to be re-used since the combo-operand 7 does not occur and is "reserved".

re p2

Also did this by hand to get my precious gold star, but then actually went back and implemented it. Some jq extension required:

```jq
#!/usr/bin/env jq -n -rR -f

#────── Little-endian (LSB first) to_bits and from_bits ──────#
def to_bits:
  if . == 0 then [0] else { a: ., b: [] } | until (.a == 0;
    .a /= 2 |
    if .a == (.a|floor) then .b += [0]
    else .b += [1] end | .a |= floor
  ) | .b end;
def from_bits:
  { a: 0, b: ., l: length, i: 0 } | until (.i == .l;
    .a += .b[.i] * pow(2;.i) | .i += 1
  ) | .a;

#─────────── Little-endian xor returns integer ────────────#
def xor(a;b): [a, b] | transpose | map(add%2) | from_bits ;

[ inputs | scan("\\d+") | tonumber ] | .[3:] |= [.]
| . as [$A,$B,$C,$pgrm] |

# Assert #
if [first(
  range(8) as $x |
  range(8) as $y |
  range(8) as $_ |
  [
    [2,4],  # B = A mod 8            # Zi
    [1,$x], # B = B xor x            # = A[i*3:][0:3] xor x
    [7,5],  # C = A << B (w/ B < 8)  # = A(i*3;3) xor x
    [1,$y], # B = B xor y            # Out[i]
    [0,3],  # A << 3                 # = A(i*3+Zi;3) xor y
    [4,$_], # B = B xor C            # xor Zi
    [5,5],  # Output B mod 8         #
    [3,0]   # Loop while A > 0       # A(i*3;3) = Out[i]
  ] | select(flatten == $pgrm)       # xor A(i*3+Zi;3)
)] == []                             # xor constant
then "Reverse-engineering doesn't necessarily apply!" | halt_error
end |

# When minimizing higher bits first, which should always produce   #
# the final part of the program, we can recursively add lower bits #
# Since they are always strictly dependent on a                    #
# xor of Output x high bits                                        #

def run($A):
  # $A is now always a bit array                       #
  #                 ┌──i is our shift offset for A     #
  { p: 0, $A,$B,$C, i: 0} | until ($pgrm[.p] == null;
    $pgrm[.p:.p+2] as [$op, $x] |         # Op & literal operand
    [0,1,2,3,.A,.B,.C,null][$x] as $y |   # Op & combo operand
    # From analysis all XOR operations can be limited to 3 bits   #
    # Op == 2 B is only read from A                               #
    # Op == 5 Output is only from B (mod should not be required)  #
    if   $op == 0 then .i += $y
    elif $op == 1 then .B = xor(.B|to_bits[0:3]; $x|to_bits[0:3])
    elif $op == 2
     and $x == 4 then .B = (.A[.i:.i+3] | from_bits)
    elif $op == 3
     and (.A[.i:]|from_bits) != 0
                  then .p = ($x - 2)
    elif $op == 3 then .
    elif $op == 4 then .B = xor(.B|to_bits[0:3]; .C|to_bits[0:3])
    elif $op == 5 then .out += [ $y % 8 ]
    elif $op == 6 then .B = (.A[.i+$y:][0:3] | from_bits)
    elif $op == 7 then .C = (.A[.i+$y:][0:3] | from_bits)
    else "Unexpected op and x: \({$op,$x})" | halt_error
    end | .p += 2
  ) | .out;

[ { A: [], i: 0 } | recurse (
    # Keep all candidate A that produce the end of the program, #
    # since not all will have valid low-bits for earlier parts. #
    .A = ([0,1]|combinations(6)) + .A | # Prepend all 6bit combos #
    select(run(.A) == $pgrm[-.i*2-2:] ) # Match pgrm from end 2x2 #
    | .i += 1
    # Keep only the full program matches, and convert back to int #
  ) | select(.i == ($pgrm|length/2)) | .A | from_bits
]

| min # From all valid self-replicating inputs output the lowest #
```

Re: p2

Definitely a bit tedious, I had to "play" a whole session to spot bugs that I had. It took me far longer than average. I had buggy disappearing boxes because of update order; I would recommend a basic test case of pushing a line/pyramid of boxes in every direction.

Updated Reasoning

Ok, it probably works because the tree isn't bang in the center but a bit above center; at most other steps the robots must be split roughly half-and-half vertically, like noise. And the reason it doesn't hit the minimum on an earlier horizontal step (where every step is mostly half-and-half) is the middle points on the trunk: they don't contribute to the overall product, which pushes it even lower.
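A small Python sketch of that signature, to make the "middle points don't count" part concrete. It assumes robots are `(x, y, vx, vy)` tuples on a `w` by `h` board that wraps around; the helper names are made up for the illustration.

```python
from math import prod

def step(robots, w, h):
    """Advance every robot one second on a wrapping w x h board."""
    return [((x + vx) % w, (y + vy) % h, vx, vy) for x, y, vx, vy in robots]

def quadrant_product(robots, w, h):
    """Product of the robot counts in the four quadrants."""
    mx, my = w // 2, h // 2
    counts = [0, 0, 0, 0]
    for x, y, *_ in robots:
        if x == mx or y == my:
            continue  # robots on the middle row/column count for nothing
        counts[(x > mx) * 2 + (y > my)] += 1
    return prod(counts)

def min_product_second(robots, w, h):
    """First second (out of w*h) at which the quadrant product is lowest."""
    best_second, best = 0, quadrant_product(robots, w, h)
    for second in range(1, w * h + 1):
        robots = step(robots, w, h)
        score = quadrant_product(robots, w, h)
        if score < best:
            best_second, best = second, score
    return best_second
```

Any robot sitting on the middle column (the trunk) is dropped from all four counts, so a picture with a tall centered trunk scores lower than an equally lopsided noisy frame.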

Day 14, got very lucky on this one, but too tired to think about why part 2 still worked.

spoiler

```jq
#!/usr/bin/env jq -n -R -f

# Board size         # Our list of robots positions and speed #
[101,103] as [$W,$H] | [ inputs | [scan("-?\\d+")|tonumber] ] |

# Making the assumption that the easter egg occurs #
# when the quadrant product is minimized           #
def sig:
  reduce .[] as [$x,$y] ([];
    if $x < ($W/2|floor) and $y < ($H/2|floor) then
      .[0] += 1
    elif $x < ($W/2|floor) and $y > ($H/2|floor) then
      .[1] += 1
    elif $x > ($W/2|floor) and $y < ($H/2|floor) then
      .[2] += 1
    elif $x > ($W/2|floor) and $y > ($H/2|floor) then
      .[3] += 1
    end
  ) | .[0] * .[1] * .[2] * .[3];

# Only checking for up to W * H seconds                   #
# There might be more clever things to do, to first check #
# vertical and horizontal alignment separately            #
reduce range($W*$H) as $s ({ b: ., bmin: ., min: sig, smin: 0};
  .b |= (map(.[2:4] as $v | .[0:2] |= (
    [.,[$W,$H],$v] | transpose | map(add)
    | .[0] %= $W | .[1] %= $H
  )))
  | (.b|sig) as $sig |
  if $sig < .min then
    .min = $sig | .bmin = .b | .smin = $s
  end | debug($s)
)

| debug(
  # Contrary to original hypothesis that the easter egg      #
  # happens in one of the quadrants, it occurs almost bang   #
  # in the center, but this is still somehow the min product #
  reduce .bmin[] as [$x,$y] ([range($H)| [range($W)| " "]];
    .[$y][$x] = "█"
  ) |
  .[] | add
)

| .smin + 1 # Our easter egg step
```

And a bonus tree:

I liked day 13, a bit easy but in the right way.

Edit:

Spoilers

Although saying "minimum" was a bit evil when all of the systems had exactly 1 solution (not necessarily in ℕ^2), I wonder if it's puzzle trickiness, anti-LLM (and unfortunate non comp-sci souls) trickiness or if the puzzle was maybe scaled down from a version where there are more solutions.
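A minimal sketch of why "minimum" never comes into play: with two buttons and two coordinates there is one 2x2 linear system per machine, and Cramer's rule either gives a single solution or none worth pressing. The function name `solve` and the argument names are invented here, and the numbers in the usage note below are only an illustration.

```python
def solve(ax, ay, bx, by, px, py):
    """Solve a*(ax, ay) + b*(bx, by) == (px, py) for natural numbers a and b.

    Returns (a, b), or None when the unique solution is not in N^2.
    """
    det = ax * by - bx * ay
    if det == 0:
        return None  # parallel buttons: zero or many solutions, not handled here
    a_num = px * by - bx * py
    b_num = ax * py - ay * px
    if a_num % det or b_num % det:
        return None  # the unique solution is fractional
    a, b = a_num // det, b_num // det
    return (a, b) if a >= 0 and b >= 0 else None
```

For example, `solve(94, 34, 22, 67, 8400, 5400)` gives `(80, 40)`, while `solve(2, 0, 0, 2, 3, 2)` gives `None` because the only solution has `a = 1.5`.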

re:followup

If you somehow wanted your whole final array it would also require over 1 petabyte ^^, memoization definitely recommended.

Day 11

Some hacking required to make JQ work on part 2 for this one.

Part 1, bruteforce blessedly short

```jq
#!/usr/bin/env jq -n -f

last(limit(1+25;
  [inputs] | recurse(map(
    if . == 0 then 1 elif (tostring | length%2 == 1) then .*2024 else
      tostring | .[:length/2], .[length/2:] | tonumber
    end
  ))
)|length)
```

Part 2, some assembly required, batteries not included

```jq
#!/usr/bin/env jq -n -f

reduce (inputs|[.,0]) as [$n,$d] ({}; debug({$n,$d,result}) |
  def next($n;$d): # Get next # n: number, d: depth #
    if $d == 75 then 1
    elif $n == 0 then [1 ,($d+1)]
    elif ($n|tostring|length%2) == 1 then [($n * 2024),($d+1)]
    else # Two new numbers when number of digits is even #
      $n|tostring| .[0:length/2], .[length/2:] | [tonumber,$d+1]
    end;

  # Push onto call stack #
  .call = [[$n,$d,[next($n;$d)]], "break"] |

  last(label $out | foreach range(1e9) as $_ (.;
    # until/while will blow up recursion #
    # Using last-foreach-break pattern   #
    if .call[0] == "break" then break $out
    elif
      all( # If all next calls are memoized #
        .call[0][2][] as $next
        | .memo["\($next)"] or ($next|type=="number"); .
      )
    then
      .memo["\(.call[0][0:2])"] = ([     #                 #
        .call[0][2][] as $next           # Memoize result  #
        | .memo["\($next)"] // $next     #                 #
      ] | add ) | .call = .call[1:]      # Pop call stack  #
    else
      # Push non-memoized results onto call stack #
      reduce .call[0][2][] as [$n,$d] (.;
        .call = [[$n,$d, [next($n;$d)]]] + .call
      )
    end
  ))
  # Output final sum from items at depth 0
  | .result = .result + .memo["\([$n,0])"]
) | .result
```
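In a language with cheap recursion the same memoization is a few lines. A sketch in Python, keyed on (number, remaining blinks) rather than on depth, and following the same three rules as the jq `next` above; `count` is an illustrative name.

```python
from functools import lru_cache

@lru_cache(maxsize=None)
def count(n, blinks):
    """Number of stones a single stone n turns into after the given blinks."""
    if blinks == 0:
        return 1
    if n == 0:
        return count(1, blinks - 1)
    digits = str(n)
    if len(digits) % 2 == 1:
        return count(n * 2024, blinks - 1)
    half = len(digits) // 2  # even number of digits: split into two stones
    return (count(int(digits[:half]), blinks - 1)
            + count(int(digits[half:]), blinks - 1))

def total(stones, blinks):
    return sum(count(n, blinks) for n in stones)
```

For instance, `total([125, 17], 6)` is 22. Only the counts are stored, never the stones themselves, which is what keeps 75 blinks tractable.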

I remember being quite ticked off by her takes about free will, and specifically severely misrepresenting compatibilism and calling philosophers stupid for coming up with the idea.

One look at day 9 and I had to leave it until after work (puzzles unlock at 2PM for me); it wasn't THAT hard, but I had to leave it until later.

re:10

Mwahaha I'm just lazy and did a "unique" (single word dropped for part 2) of start/end pairs.

```jq
#!/usr/bin/env jq -n -R -f

([
    inputs/ "" | map(tonumber? // -1) | to_entries
 ] | to_entries | map(     # '.' = -1 for handling examples #
    .key as $y | .value[]
    | .key as $x | .value  | { "\([$x,$y])":[[$x,$y],.] }
)|add) as $grid |          # Get indexed grid #

[
  ($grid[]|select(last==0)) | [.] |    # Start from every '0' head
  recurse(                             #
    .[-1][1] as $l |                   # Get altitude of current trail
    (                                  #
      .[-1][0]                         #
      | ( .[0] = (.[0] + (1,-1)) ),    #
        ( .[1] = (.[1] + (1,-1)) )     #
    ) as $np |                         # Get all possible +1 steps
    if $grid["\($np)"][1] != $l + 1 then
      empty                            # Drop path if invalid
    else                               #
      . += [ $grid["\($np)"] ]         # Build path if valid
    end                                #
  ) | select(last[1]==9)               # Only keep complete trails
    | . |= [first,last]                # Only Keep start/end
]

# Get score = sum of unique start/end pairs.
| group_by(first) | map(unique|length) | add
```
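The same lazy trick sketched in Python: enumerate every rising trail as a (start, end) pair, then part 1 counts the unique pairs and part 2 counts them all. `trails`, `score` and `rating` are names made up for this sketch; non-digit cells are simply skipped.

```python
def trails(grid):
    """Yield (start, end) for every path that rises by 1 from a 0 to a 9."""
    heights = {
        (x, y): int(c)
        for y, row in enumerate(grid)
        for x, c in enumerate(row)
        if c.isdigit()
    }

    def walk(pos):
        if heights[pos] == 9:
            yield pos
            return
        x, y = pos
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            if heights.get(nxt) == heights[pos] + 1:
                yield from walk(nxt)

    for start, height in heights.items():
        if height == 0:
            for end in walk(start):
                yield start, end

def score(grid):   # part 1: unique start/end pairs
    return len(set(trails(grid)))

def rating(grid):  # part 2: same thing with the "unique" dropped
    return len(list(trails(grid)))
```

On the small grid `["0123", "1234", "8765", "9876"]` there is a single trailhead reaching a single 9, so `score` is 1, while `rating` counts all 16 distinct ways of getting there.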

Stubsack: weekly thread for sneers not worth an entire post, week ending Sunday 15 September 2024

Need to let loose a primal scream without collecting footnotes first? Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid: Welcome to the Stubsack, your first port of call for learning fresh Awful you’ll near-instantly regret.

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post — there’s no quota for posting and the bar really isn’t that high.

> The post Xitter web has spawned soo many “esoteric” right wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked (as much as they should be)
>
> Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

(Semi-obligatory thanks to @dgerard for starting this)

AGI Sparklings proponents rejoice! Finding a literal map(*) means LLMs have a world model.

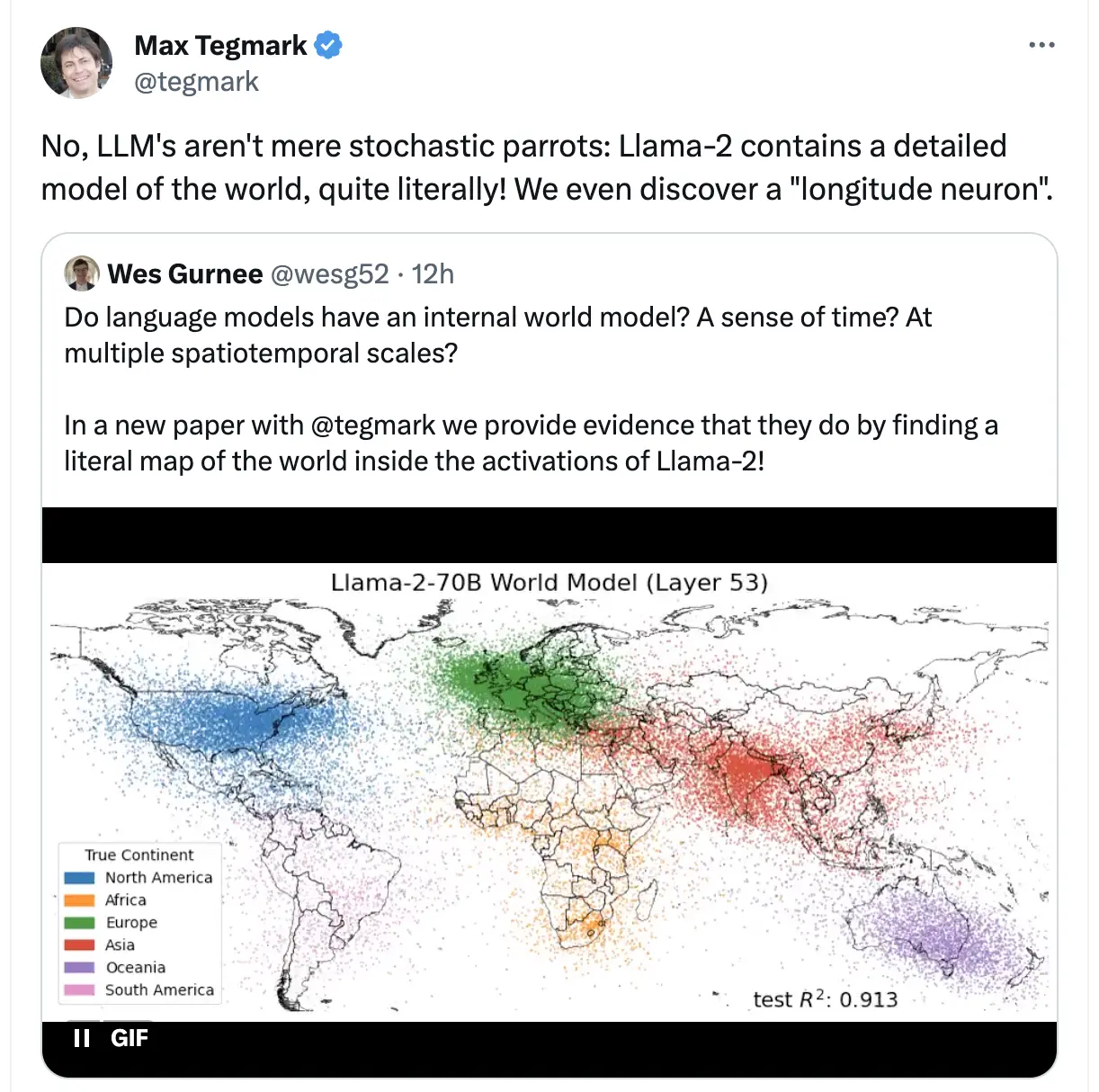

Transcribed:

> Max Tegmark (@tegmark):
> No, LLM's aren't mere stochastic parrots: Llama-2 contains a detailed model of the world, quite literally! We even discover a "longitude neuron"
>
> > Wes Gurnee (@wesg52):
> > Do language models have an internal world model? A sense of time? At multiple spatiotemporal scales?
> > In a new paper with @tegmark we provide evidence that they do by finding a literal map of the world inside the activations of Llama-2!
> > [image with colorful dots on a map]

__________________________________________________________________

With this dastardly deliberate simplification of what it means to have a world model, we've been struck a mortal blow in our skepticism towards LLMs; we have no choice but to convert surely!

(*) Asterisk: Not an actual literal map, what they really mean to say is that they've trained "linear probes" (each its own mini-model) on the activation layers, for a bunch of inputs, minimizing loss for latitude and longitude (and/or time, blah blah).

And yes, from the activations you can get a fuzzy distribution of lat,long on a map, and yes, they've been able to isolate individual "neurons" that seem to correlate in activation with latitude and longitude. (Frankly, not being able to find one would have been surprising to me; this doesn't mean LLMs aren't just big statistical machines, in this case being trained with data containing literal lat,long tuples for cities in particular.)

It's a neat visualization and result, but it is sort of comically missing the point.

__________________________________________________________________

Bonus sneers from @emilymbender:

- You know what's most striking about this graphic? It's not that mentions of people/cities/etc from different continents cluster together in terms of word co-occurrences. It's just how sparse the data from the Global South are. -- Also, no, that's not what "world model" means if you're talking about the relevance of world models to language understanding. (source)

- "We can overlay it on a map" != "world model" (source)

Humble EY can move goalposts in long format.

With interspaced sneerious rephrasing:

> In the close vicinity of sorta-maybe-human-level general-ish AI, there may not be any sharp border between levels of increasing generality, or any objectively correct place to call it AGI. Any process is continuous if you zoom in close enough.

The profound mysteries of reality carving, means I get to move the goalposts as much as I want. Besides I need to re-iterate now that the foompocalypse is imminent!

> Unless, empirically, somewhere along the line there's a cascade of related abilities snowballing. In which case we will then say, post facto, that there's a jump to hyperspace which happens at that point; and we'll probably call that "the threshold of AGI", after the fact.

I can't prove this, but it's the central tenet of my faith, we will recognize the face of god when we see it. I regret that our hindsight 20-20 event is so conveniently inconveniently placed in the future, the bad one no less.

> Theory doesn't predict-with-certainty that any such jump happens for AIs short of superhuman.

See how much authority I have, it is not "My Theory" it is "The Theory", I have stared into the abyss and it peered back and marked me as its prophet.

> If you zoom out on an evolutionary scale, that sort of capability jump empirically happened with humans--suddenly popping out writing and shortly after spaceships, in a tiny fragment of evolutionary time, without much further scaling of their brains.

The forward arrow of Progress™ is inevitable! S-curves don't exist! The y-axis is practically infinite! We should extrapolate only from the past (eugenically scaled certainly) century! Almost 10 000 years of written history, and millions of years of unwritten history for the human family counts for nothing!

> I don't know a theoretically inevitable reason to predict certainly that some sharp jump like that happens with LLM scaling at a point before the world ends. There obviously could be a cascade like that for all I currently know; and there could also be a theoretical insight which would make that prediction obviously necessary. It's just that I don't have any such knowledge myself.

I know the AI god is a NeCeSSarY outcome, I'm not sure where to plant the goalposts for LLM's and still be taken seriously. See how humble I am for admitting fallibility on this specific topic.

> Absent that sort of human-style sudden capability jump, we may instead see an increasingly complicated debate about "how general is the latest AI exactly" and then "is this AI as general as a human yet", which--if all hell doesn't break loose at some earlier point--softly shifts over to "is this AI smarter and more general than the average human". The world didn't end when John von Neumann came along--albeit only one of him, running at a human speed.

Let me vaguely echo some of my beliefs:

- History is driven by great men (of which I must be, but cannot so openly say), see our dearest elevated and canonized von Neumann.

- JvN was so much above the average plebeian man (IQ and eugenics good?) and the AI god will be greater.

- The greatest single entity/man will be the epitome of Intelligence™, breaking the wheel of history.

> There isn't any objective fact about whether or not GPT-4 is a dumber-than-human "Artificial General Intelligence"; just a question of where you draw an arbitrary line about using the word "AGI". Albeit that itself is a drastically different state of affairs than in 2018, when there was no reasonable doubt that no publicly known program on the planet was worthy of being called an Artificial General Intelligence.

No no no, General (or Super) Intelligence is not a completely un-scoped metric. Again it is merely a fuzzy boundary where I will be able to arbitrarily move the goalposts while being able to claim my opponents are!

> We're now in the era where whether or not you call the current best stuff "AGI" is a question of definitions and taste. The world may or may not end abruptly before we reach a phase where only the evidence-oblivious are refusing to call publicly-demonstrated models "AGI".

Purity-testing ahoy, you will be instructed to say shibboleth three times and present your Asherah poles for inspection. Do these mean unbelievers not see these N-rays as I do? What do you mean what we have (or almost have, I don't want to be too easily dismissed) is not evidence of sparks of intelligence?

> All of this is to say that you should probably ignore attempts to say (or deniably hint) "We achieved AGI!" about the next round of capability gains.

Wasn't Sam the Altman so recently cheeky? He'll ruin my grift!

> I model that this is partially trying to grab hype, and mostly trying to pull a false fire alarm in hopes of replacing hostile legislation with confusion. After all, if current tech is already "AGI", future tech couldn't be any worse or more dangerous than that, right? Why, there doesn't even exist any coherent concern you could talk about, once the word "AGI" only refers to things that you're already doing!

Again I reserve the right to remain arbitrarily alarmist to maintain my doom cult.

> Pulling the AGI alarm could be appropriate if a research group saw a sudden cascade of sharply increased capabilities feeding into each other, whose result was unmistakeably human-general to anyone with eyes.

Observing intelligence is famously something eyes are SufFicIent for! No this is not my implied racist, judge someone by the color of their skin, values seeping through.

> If that hasn't happened, though, deniably crying "AGI!" should be most obviously interpreted as enemy action to promote confusion; under the cover of selfishly grabbing for hype; as carried out based on carefully blind political instincts that wordlessly notice the benefit to themselves of their 'jokes' or 'choice of terminology' without there being allowed to be a conscious plan about that.

See Unbelievers! I can also detect the currents of misleading hype, I am no buffoon, only these hypesters are not undermining your concerns, they are undermining mine: namely damaging our ability to appear serious and recruit new cult members.

If learning incorrect things is EY's only definition of trauma, his existence must be eternal torment.

> @EY
> This advice won't be for everyone, but: anytime you're tempted to say "I was traumatized by X", try reframing this in your internal dialogue as "After X, my brain incorrectly learned that Y".

I have to admit, for a brief moment I thought he was correctly expressing displeasure at Twitter.

> @EY
> This is of course a dangerous sort of tweet, but I predict that including variables into it will keep out the worst of the online riff-raff - the would-be bullies will correctly predict that their audiences' eyes would glaze over on reading a QT with variables.

Fool! This bully (is it weird to speak in the third person?) thinks using variables here makes it MORE sneer-worthy, especially since this appears to be general advice, but I would struggle to think of a single instance in my life where it's been applicable.

Eliezer reveals his inner chūnibyō and inability to do math.

> @ESYudkowsky:
> Remember when you were a kid and thought you might have psychic powers, so you dealt yourself face-down playing cards and tried to guess whether they were red or black, and recorded your accuracy rate over several batches of tries?
>
> And then remember how you had absolutely no idea to do stats at that age, so you stayed confused for a while longer?

-----------------------------------------------------------------------

Apologies for the usage of Japanese, but it is a very apt description: https://en.wikipedia.org/wiki/Chūnibyō

The atom bomb was summoned by wizards and pure thought.

And of course no experiments whatsoever; the cost of the Manhattan Project and its hundreds of thousands of employees were merely a "focusing" magick, a sacrifice to reinforce the greater powers of our handful of esteemed and glorious thinking men, who wrought the power of destruction from the æther.

> @ESYudkowsky:
> Yes, but because the first nuclear weapon makers knew what the duck they were doing - analytic precise prediction of desired outcomes and of each intervening step. AGI makers lack similar mastery or anything remotely close, and have a much harder problem; that's the big issue.
>
> > @EigenGender:
> > seems pretty noteworthy that the first nuclear weapons were made under conditions where they couldn’t do any experiments and they involved a lot of math but still worked on the first try.