It seemed like a verbose, overengineered, unnecessary framework that introduces complexity. I didn't see a strong use case for it, maybe because there is no obvious one, or because I don't understand it well enough.

It also didn't leave a strong impression. I had to look at the site and goal/description to remember.

Maybe some niche data handlers and implementors have use for it. But a Wikimedia project seems overblown for that.

I have not used it though. I'm open to being shown and corrected.

What conclusion did you come to?

He's gonna live a long life. Until we know pi.

I really like Calendar Versioning (CalVer).

Gives so much more meaning to version numbers. Immediately obvious how old, and from when.

Nobody knows when Firefox 97 released. If it were 22.2 you'd know it's from February 2022.

It doesn't conflict with semver either. You can use y.M.<release>. (I would prefer using yy.MM. but leading 0 is not semver.)
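A minimal sketch of the y.M.<release> idea, to show it stays valid semver. The `calVer` helper and its parameters are made up for illustration; they are not from any library.

```javascript
// Hypothetical helper: builds a semver-compatible CalVer string "y.M.<release>".
function calVer(date, release) {
  const year = date.getUTCFullYear() % 100; // two-digit year as a number, e.g. 22
  const month = date.getUTCMonth() + 1;     // getUTCMonth() is zero-based
  // Numbers are interpolated without padding, so no leading zeros appear.
  return `${year}.${month}.${release}`;
}

const firefoxLike = calVer(new Date(Date.UTC(2022, 1, 8)), 0);
console.log(firefoxLike); // 22.2.0
```

Because the parts are plain numbers, February becomes 2 rather than 02, which is what keeps the result within semver's rule against leading zeros.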

This is not a supply chain attack, it is sudden extreme enshittification. According to the article, the attacker also bought the GitHub repo.

I don't see how buying the GitHub repo as well makes it enshittification rather than a supply chain attack.

They bought into the supply chain. It's a supply chain attack.

Ribbit & Rattle Devlog Time - With an Adventure Time Themed Intro

That intro though.

Where would/should the mapping happen? Probably not the Set constructor. JSON.parseSet()?

JSON.parseSet = json => new Set(JSON.parse(json));

JSON.parseSet('["A", "B", "C", "A", "B"]'); // Set(3) [ "A", "B", "C" ]

/edit: JSON.parseMap()

JSON.parseMap = json => new Map(Object.entries(JSON.parse(json)));

JSON.parseMap('{"a":1,"b": 2}'); // Map { a → 1, b → 2 }

In my Firefox I get a NS_BINDING_ABORTED error on the Google Fonts font request.

And they didn't specify a font fallback, only their external web font. It would have worked if they had added monospace as a fallback.

Ignoring secondary email addresses, my primary [onlineaccount] email address has changed four times.

No readily-compilable project is still a worthwhile barrier. So I don't think it's a bad argument.

If it's about open-source licenses, they typically allow that kind of repackaging. That is not the case for closed-source/proprietary software.

Goofy Godot Animation Celebrating 4.0 Release (2020)

Seems like a valid formalization.

I think one or a few counter-examples would go a long way though.

The "rectangle" probably isn't supposed to be this messy?

generate 32-char-pw -> "Must not be longer than 20" 🤨

generate 32-char-pw -> "you must include a specific special character" 🤨

below 10 characters is truly atrocious - and thankfully rare

Are you saying "don't use a synthetic key, you ain't gonna need it"?

People regularly change email addresses. Using that as an example is a particularly bad choice in my opinion.

While debugging you can now hover over any delegate and get a convenient Go to source link, making it easier to navigate to underlying code.

> When you pause while debugging, you can hover over any delegate and get a convenient go to source link, here is an example with a Func delegate.

If you already know about delegates, there's not a lot of content in this dev blog post. Not that that's necessarily a bad thing either.

Explore opportunities to refactor your C# code with default lambda parameters, a new feature in C# 12.

many2one: so in this relationship you will have more than one record in one table which matches to only one record in another table. something like A <-- B. where (<--) is foreign key relationship. so B will have a column which will be mapped to more than one record of A.

no, the other way around

When B has a foreign key to A, many B records may relate to one A record. That's the many2one part.

The fact that different B records can point to different A records is irrelevant to that.

one2many: same as many2one but instead now the foreign key constraint will look something like A --> B.

It's the same, mirrored. Or mirrored interpretation / representation to be more specific. (No logical change.)

If you had B --> A for many2one, then the foreign key relationship is still B --> A. But if you want to represent it from A perspective, you can say one2many - even though A does not hold the foreign keys.

In relational database schemata, a foreign key column holds exactly one reference per row, so the definition itself always reads as one to one. Only by querying for all rows that share the same id will you identify the many.
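To make the mirrored reading concrete, here is a small sketch with in-memory arrays standing in for tables. The table contents and the `parentOf` / `childrenOf` helpers are made up for illustration.

```javascript
// Hypothetical tables as arrays of records: B holds the foreign key to A.
const tableA = [{ id: 1, name: "a1" }, { id: 2, name: "a2" }];
const tableB = [
  { id: 10, aId: 1 },
  { id: 11, aId: 1 },
  { id: 12, aId: 2 },
];

// many2one: from a B record, follow its foreign key to exactly one A record.
function parentOf(b) {
  return tableA.find(a => a.id === b.aId);
}

// one2many: the same data read from A's side, by querying for the shared id.
function childrenOf(a) {
  return tableB.filter(b => b.aId === a.id);
}

console.log(parentOf(tableB[1]).name);             // a1
console.log(childrenOf(tableA[0]).map(b => b.id)); // [ 10, 11 ]
```

Both functions use the same `aId` column on B. Nothing about the stored data changes between the two readings, only the side you start from.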

many2many: this one is interesting because this relationship doesn’t make use of foreign key directly. to have this relationship between A and B you have to make a third database something like AB_rel. AB_rel will hold values of primary key of A and also primary key of B. so that way we can map those two using AB_rel table.

Notably, we still make use of foreign keys. But because one record does not necessarily have only one FK value, we can't store it in a single column and have to save it in a separate table.

This association table AB_rel will then hold the foreign keys to both sides.
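A sketch of the same idea with arrays standing in for tables. The records and the `relatedB` / `relatedA` helpers are hypothetical; only the `AB_rel` idea comes from the discussion above.

```javascript
// Hypothetical records on both sides; neither side stores a foreign key itself.
const recordsA = [{ id: 1 }, { id: 2 }];
const recordsB = [{ id: 10 }, { id: 11 }];

// Association table: each row holds one foreign key to A and one to B.
const abRel = [
  { aId: 1, bId: 10 },
  { aId: 1, bId: 11 },
  { aId: 2, bId: 10 },
];

// All B records related to a given A record.
function relatedB(aId) {
  const bIds = new Set(abRel.filter(r => r.aId === aId).map(r => r.bId));
  return recordsB.filter(b => bIds.has(b.id));
}

// All A records related to a given B record.
function relatedA(bId) {
  const aIds = new Set(abRel.filter(r => r.bId === bId).map(r => r.aId));
  return recordsA.filter(a => aIds.has(a.id));
}

console.log(relatedB(1).map(b => b.id));  // [ 10, 11 ]
console.log(relatedA(10).map(a => a.id)); // [ 1, 2 ]
```

Each row in `abRel` is still a pair of ordinary foreign keys, so the many-to-many is really two many-to-one relationships meeting in one table.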

When something hits you in the face you turn blue. This essentially hits you in the face, and matches that color.

I don't have multi-user library maintenance experience in particular, but

I think a library with multiple users has to have a particular consideration for them.

- Make changes in a well-documented and obvious way

- Each release has a list of categorized changes (and if the lib has multiple concerns or sections, preferably sectioned by them too)

- Each release follows semantic versioning - break existing APIs (specifically obsoletion) only on major

- Preferably mark obsoletion one feature or major release before a removal release

- Consider timing of feature / major version releases so there's plannable time frames for users

- For internal company use, I would consider users close and small-number enough to think about direct feedback channels of needs and concerns and upgrade support (and maybe even pushing for them [at times])
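As a rough sketch of the "mark obsoletion before removal" point: a wrapper that keeps the old API working but warns once on first use. The `deprecate` helper, `oldSum`, and the version number in the message are made up for illustration (Node.js ships a built-in `util.deprecate` that serves a similar purpose).

```javascript
// Hypothetical helper: wraps a function so its first call logs a deprecation notice.
function deprecate(fn, message, log = console.warn) {
  let warned = false;
  return function (...args) {
    if (!warned) {
      warned = true; // warn only once to avoid flooding user logs
      log(`DeprecationWarning: ${message}`);
    }
    return fn.apply(this, args);
  };
}

// Collect notices in an array here so the behavior is easy to inspect.
const notices = [];
const oldSum = deprecate(
  (a, b) => a + b,
  "oldSum() will be removed in 3.0.0; use sum() instead.",
  msg => notices.push(msg)
);

console.log(oldSum(1, 2)); // 3
console.log(oldSum(3, 4)); // 7
console.log(notices.length); // 1
```

The old function keeps behaving the same for a whole release cycle, and the message tells users which release removes it and what to migrate to.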

I think "keeping all users in sync" is a hard ask that will likely cause conflict and frustration (on both sides). I don't know your company or project landscape though. Just as a general, most common expectation.

So between your two alternatives, I guess it's more of point 1? I don't think it should be "rapidly develop" though. I'm thinking more of mindful, "isolated" lib development with feedback channels, somewhat predictable planning, and documented release/upgrade changes.

If you're not doing mindful thorough release management, the "saved" effort will likely land elsewhere, and may very well be much higher.

Ubuntu LTS.

It has the option for PPAs when the distro doesn't offer packages or recent package updates but the upstream project does.

It's a well-established and stable distro.

of holding the hammer?

MSTest 3.4 is available. Learn all about the highlighted features and fixes that will make your testing experience always better.

EF Core 8 introduces support for mapping typed arrays of simple values to database columns so the semantics of the mapping can be used in the SQL generated from LINQ queries.

Mapping C# array types to PostgreSQL array columns or other DBMS/DB JSON columns.

We have redesigned the Visual Studio Resource Explorer! Now you can manage all your localizations from a single unified view.

Available and enabled by default from version 17.11 Preview 2 onwards.

The new Resource Explorer additionally supports search, a single view across the solution, editing multiple files and locales at once, dark mode, string.Format pattern validation, validation and warnings, a combined string and media view, and grid zoom.

Introducing .NET Smart Components, a set of genuinely useful AI-powered UI components that you can quickly and easily add to .NET apps.

cross-posted from: https://programming.dev/post/11720354

UI Components: Smart Paste, Smart TextArea, Smart ComboBox

Dependency: Azure Cloud

They show an interesting new kind of interactivity. (Not that I, personally, would ever use Azure Cloud for that though.)

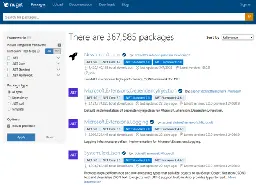

Introducing NuGet.org's Compatible Framework Filters - The NuGet Blog

Last year, we introduced search by target frameworks on NuGet.org, allowing you to filter your search results based on the framework(s) that a package targets. We received plenty of great feedback from you on how to make the filters more effective,

> Backwards compatibility is a key principle in .NET, and this means that packages targeting previous .NET versions, like ‘net6.0’ or ‘net7.0’, are also compatible with ‘net8.0’. […]
>
> The new “Include compatible frameworks” option we added allows you to flip between filtering by explicit asset frameworks and the larger set of ‘compatible’ frameworks. Filtering by packages’ compatible frameworks now reveals a much larger set of packages for you to choose from.

RTX Remix: Graphically Enhancing Older Games [video demonstration] [2kliksphilip]

Truly astonishing how much generalized modding seems to be possible through general DirectX (8/9) interfaces and official Nvidia provided tooling.

As an AMD graphics card user, I find it very unfortunate that RTX and this functionality are proprietary and exclusive to Nvidia. The tooling at least. The produced results supposedly should work on other graphics cards too (I didn't find official/upstream docs about it).

For more technical details of how it works, see the GameWorks wiki:

- https://github.com/NVIDIAGameWorks/rtx-remix/wiki

- https://github.com/NVIDIAGameWorks/rtx-remix/wiki/Compatibility

Opus 1.5 Released - achieves audible, non-stuttering talk at 90% packet loss

cross-posted from: https://programming.dev/post/11034601

There's a lot, and specifically a lot of machine learning talk and features in the 1.5 release of Opus - the free and open audio codec.

Audible and continuous (albeit jittery) talk on 90% packet loss is crazy.

Section WebRTC Integration → Samples has an example where you can test out the 90% packet loss audio.

Scientists warn of AI collapse - Converging Results (Video)

Balancing convenience against security, and how you can tune the knobs toward more security.

Describes considerations of convenience and security of auto-confirmation while entering a numeric PIN - which leads to information disclosure considerations.

> An attacker can use this behavior to discover the length of the PIN: Try to sign in once with some initial guess like “all ones” and see how many ones can be entered before the system starts validating the PIN.
>
> Is this a problem?
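The quoted length probe can be simulated in a few lines. This is only a sketch under my own assumptions: `createAutoConfirmField` is a made-up stand-in for a sign-in field that validates as soon as enough digits are entered, not how any real system implements it.

```javascript
// Hypothetical sign-in field that validates as soon as the input reaches the PIN's length.
function createAutoConfirmField(pin) {
  let entered = "";
  return {
    // Returns "pending" while more digits are expected, otherwise the validation result.
    press(digit) {
      entered += digit;
      if (entered.length < pin.length) return "pending";
      const result = entered === pin ? "accepted" : "rejected";
      entered = ""; // reset for the next attempt
      return result;
    },
  };
}

// Attacker's view: enter ones until the system starts validating, and count them.
function discoverPinLength(field) {
  let count = 0;
  let state;
  do {
    state = field.press("1");
    count += 1;
  } while (state === "pending");
  return count;
}

console.log(discoverPinLength(createAutoConfirmField("493027"))); // 6
```

The probe costs the attacker a single sign-in attempt, and afterwards they know how many digits to guess.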